Linux Containers, Minus the Baggage

- Stephen Berard

- 3 days ago


Atym brings Linux containers where Docker/Podman can’t

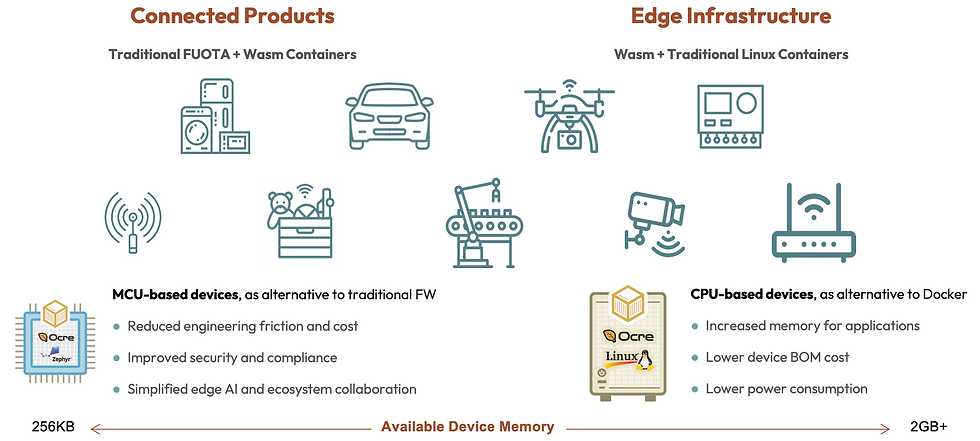

In my previous post, I introduced our new support for traditional Linux containers running alongside native WebAssembly (Wasm)-based containers on the Atym Runtime. Both formats can be stored in the same OCI-compliant repositories as Docker and Podman containers, and our Wasm containers are portable to MCU-based devices with as little as 256 KB of memory.

Atym Linux containers have roughly an order of magnitude less overhead than Docker or Podman. That immediately raises the question: how? At a high level, we do this by minimizing the on-device runtime surface to execution-critical components and shifting the remaining control-plane responsibilities to the Atym Hub. The result isn’t just a smaller footprint, but a streamlined deployment model.

That difference matters most at the hardware boundary. Atym is designed to support containers on classes of devices that traditional container platforms like Docker and Podman simply can’t address — for example, we can readily support Linux-based containers on devices that have just 128MB of total RAM.

Read on for how we do this and the architectural decisions that make Atym containers suitable for deployment across the billions of resource-constrained devices that Docker and Podman have left behind.

Atym’s target edge hardware

Most discussions of container efficiency assume PC or server-class machines. The debate centers on whether `dockerd` consumes 60 MB or 200 MB of RAM at idle, or whether layered storage costs tens or hundreds of megabytes of disk. Those differences matter—but they miss the more fundamental constraint.

In embedded environments, the dominant hardware profile sits below the practical operating range of mainstream container platforms. Support for lower-end 32-bit ARM SoCs has lagged, and production deployments typically assume 64-bit ARM systems with significantly more memory headroom. In practice, the minimum footprint for Docker on Linux is 256 MB of memory, and Docker-class environments are typically built around 64-bit ARM SoCs with at least 2 GB of RAM. That requirement constrains hardware selection and directly increases BOM cost, which is a particular concern now that memory prices have recently doubled.

Atym’s hardware support picks up where these traditional container solutions fall short. A commonly available system representative of this class is the Raspberry Pi Zero: a single-core, 32-bit ARM SoC with 512 MB of RAM. Docker and Podman aren’t viable technologies for this class of device, but it is well within Atym’s operational range.

Atym is optimized for hardware ranging from lightweight CPU-based edge infrastructure, such as IoT gateways, industrial controllers, switches, routers, and set-top boxes, to MCU-based embedded devices and systems, including sensors, robots, drones, vehicles, cameras, and beyond.

The relevant question, then, is not how much overhead Atym saves on a Docker-class machine, but what becomes possible when containers are viable on hardware where they previously were not.

The fine print on footprint

Understanding the composition of a conventional container stack is useful because it defines the baseline against which footprint and capability are typically evaluated—even when that baseline doesn’t exist on the target hardware.

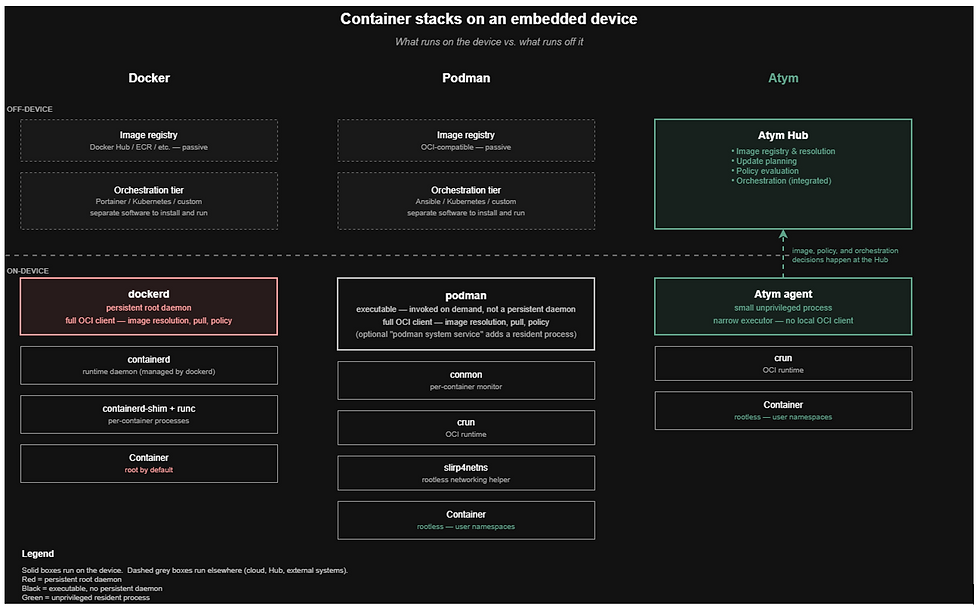

On Linux, a standard container stack is multi-layered. Docker, for example, relies on a persistent root-owned daemon (`dockerd`), which depends on `containerd` for lifecycle management and `runc` as the low-level OCI runtime. A typical Docker installation also includes a local image store, a build subsystem, a networking layer with plugins, and a feature-rich CLI—an environment designed for developer workstations and server-class systems.

Podman reduces some of this complexity. It eliminates the daemon, runs rootless by default, and uses `crun` or `runc` as the OCI runtime. However, it remains a full-featured local OCI client: the binary, configuration, image store, and networking stack all reside on each host.

In practice, the stack extends further. Operating containers at fleet scale requires orchestration, which is not built into Docker or Podman. Teams layer on systems such as Portainer, Kubernetes, Argo CD, or custom agents, each introducing additional processes, storage, and operational complexity on the device.

For server-class environments, this architecture is appropriate. For resource-constrained edge devices, it is largely overhead—and on the class of hardware described earlier, significant portions of this stack are not viable at all.

A key architectural choice: centralizing the overhead

A number of commercially available platforms support Docker containers on embedded devices. These Docker-derived container stacks can be adapted for devices with as little as 512 MB of memory through careful optimization of the engine, OS, and update model. In practice, though, a minimum of 1 GB is desirable.

The challenge is that these systems remain fundamentally node-centric, with each device running a full OCI client, managing its own images, and performing its own resolution and update logic. This functionality represents a tax that precludes support for resource-constrained devices that represent roughly two-thirds of the edge footprint by volume.

Atym supports resource-constrained devices by shifting much of that responsibility to the central controller. The Atym Runtime focuses on execution, while the Atym Hub handles control-plane concerns such as image resolution and deployment planning.

The result isn’t just a smaller footprint, but a different distribution of responsibility between device and control plane.
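The division of labor described above can be sketched in a few lines. This is a minimal illustration and not Atym's actual protocol: `Instruction`, `hub_plan`, `device_apply`, and the `REGISTRY_TAGS` lookup table are hypothetical names invented for this example, and the digests are made up. What it shows is that tag resolution happens once, centrally, and each device only converges on an already-resolved digest.

```python
from dataclasses import dataclass

# Hypothetical stand-in for a registry lookup: mutable tag -> content digest.
# A real control plane would query an OCI registry; these values are made up.
REGISTRY_TAGS = {
    "sensor-app:stable": "sha256:aaa111",
    "sensor-app:canary": "sha256:bbb222",
}


@dataclass(frozen=True)
class Instruction:
    """Explicit, fully resolved instruction sent to a device (hypothetical)."""
    container: str
    image_digest: str


def hub_plan(container: str, image_ref: str) -> Instruction:
    """Control-plane side: resolve a mutable tag to an immutable digest."""
    return Instruction(container=container, image_digest=REGISTRY_TAGS[image_ref])


def device_apply(state: dict, instr: Instruction) -> str:
    """Device side: no tag resolution or policy logic, only convergence."""
    if state.get(instr.container) == instr.image_digest:
        return "noop"
    state[instr.container] = instr.image_digest
    return "updated"


device_a, device_b = {}, {}
instr = hub_plan("sensor", "sensor-app:stable")
results = [
    device_apply(device_a, instr),
    device_apply(device_b, instr),
    device_apply(device_a, instr),  # re-applying the same intent changes nothing
]
```

Because both devices receive the same resolved instruction, they end in the same state, and re-sending the instruction is a no-op.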

What we kept — and why crun?

The OCI specification defines two core contracts: an image format and a runtime. Any engine that implements the runtime contract can execute any image that conforms to the image format. That interface is what makes a container portable; everything else is tooling layered around it.

The Atym Runtime implements the OCI runtime contract while minimizing the on-device machinery required to execute it. At the lowest layer, we use `crun`, the same C-based OCI runtime used by Podman and CRI-O. `crun` is small, performant, actively maintained, and fully standards-compliant. It delivers equivalent container behavior to `runc` with a significantly smaller footprint, making it a straightforward choice for constrained environments.

Above `crun`, Atym exposes only the runtime surface that workloads actively exercise: image retrieval and verification, unpacking, container launch (namespaces and cgroups), networking, logging, and lifecycle management. This is the operational core of container execution—and it defines the boundary of what must reside on the device.
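To make the runtime contract concrete, here is a rough sketch of the kind of `config.json` an OCI runtime consumes. The field names follow the OCI runtime specification; the values (the `/bin/sensor-app` command, the 32 MiB memory limit, the namespace selection) are illustrative assumptions, and `build_runtime_config` is a hypothetical helper, not something the Atym Runtime is known to expose.

```python
import json


def build_runtime_config(args, memory_limit_bytes):
    """Return a minimal OCI runtime config (the contents of config.json)."""
    return {
        "ociVersion": "1.0.2",
        "process": {
            "args": list(args),
            "cwd": "/",
            "user": {"uid": 0, "gid": 0},
        },
        # Path is relative to the bundle directory that holds config.json.
        "root": {"path": "rootfs", "readonly": True},
        "linux": {
            # Namespaces isolate what the process can see.
            "namespaces": [
                {"type": t} for t in ("pid", "mount", "ipc", "uts", "network")
            ],
            # cgroup resources cap what the process can consume.
            "resources": {"memory": {"limit": memory_limit_bytes}},
        },
    }


# Hypothetical workload: one binary capped at 32 MiB of memory.
config = build_runtime_config(["/bin/sensor-app"], 32 * 1024 * 1024)
config_json = json.dumps(config, indent=2)
```

An OCI runtime such as `crun` works from a bundle directory that holds this `config.json` next to the unpacked `rootfs`; everything else in a container platform is tooling that produces or manages such bundles.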

Everything remains OCI-compliant. Images built with Docker, Buildah, or other standard tooling run on Atym without modification, and existing registries—Docker Hub, GitHub Container Registry, Harbor, ECR, private registries, or the Atym-hosted registry—integrate directly. Existing build and deployment workflows carry over unchanged.

The guiding principle is simple: if a capability is required to execute workloads at runtime, it belongs on the device. If it exists to support development workflows, local tooling, or scenarios not relevant to resource-constrained systems, it is moved off the device. Maintaining this separation of concerns accounts for the majority of Atym’s footprint and complexity reduction.

What we left off the device (and why it’s not missed)

Much of the on-device footprint reduction comes from what we deliberately left out. Each omission reflects a specific design decision about what must run locally versus what can be handled elsewhere.

No additional background daemon in the Docker sense. We do include a lightweight on-device agent, but its scope is intentionally narrow: it communicates with the Atym Hub, supervises container execution, and reports status. It’s not a dockerd-scale service exposing a REST API, managing builds, or operating a full networking subsystem.

No local build toolchain. Image builds occur in CI or on developer workstations, then are pushed to a registry and deployed. The build subsystem included in Docker is only used at build time; replicating it across every device in a fleet adds unnecessary footprint and complexity.

No full-featured local CLI. Device interaction occurs through the Atym Hub, not through direct shell access. The device doesn’t need to expose a comprehensive local interface to represent its runtime state. This also strengthens the device’s security posture by reducing opportunities for local tampering.

No Docker-specific networking stack. Atym doesn’t include overlay drivers, plugin frameworks, or integrated service discovery functions that are often not used for edge deployments anyway. We provide only the networking primitives required by embedded workloads.

No independent image management logic. Image resolution, layer selection, and update planning are coordinated centrally by the Atym Hub, rather than executed independently on each device.

These aren’t feature gaps; they are deliberate architectural omissions to optimize for resource-constrained hardware. In each case, the decision is the same: if a capability is unnecessary for runtime execution or can be handled more efficiently at the control plane, it’s excluded from the device stack.
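As one illustration of what centralized image management could look like, the sketch below computes which layers a device still needs. It assumes the device reports its cached layer digests to the control plane, which is an assumption made for this example; `plan_layer_pulls` and the digests are hypothetical.

```python
def plan_layer_pulls(image_layers, device_cache):
    """Return the layers a device must fetch, preserving manifest order."""
    cached = set(device_cache)
    return [layer for layer in image_layers if layer not in cached]


# Made-up digests: the new image shares two layers with what is already cached.
manifest_layers = ["sha256:base", "sha256:libs", "sha256:app-v2"]
device_reported_cache = ["sha256:base", "sha256:libs", "sha256:app-v1"]

to_pull = plan_layer_pulls(manifest_layers, device_reported_cache)
```

In this arrangement the device is told exactly which blobs to fetch, so it needs no manifest-diffing logic of its own.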

Three design choices that change the math

In summary, these three architectural decisions account for the majority of the footprint and operational differences between Atym and traditional container platforms:

1. Control plane shifted off-device. In Docker- and Podman-based deployments, each device operates as a full OCI client. When an image changes, the device is responsible for discovering updates, resolving tags, selecting layers, determining cache state, enforcing policy, and coordinating the pull. This introduces significant logic, and corresponding memory and storage overhead, on every device. Atym takes a different approach. Image resolution, update planning, and policy evaluation are handled centrally by the Hub. Devices receive explicit execution instructions and operate deterministically. The on-device agent remains narrow in scope, and the system becomes easier to reason about: a given deployment intent produces a consistent on-device state across the fleet.

2. Orchestration integrated by design. Docker and Podman stop at container execution. Fleet-level concerns (deployment, rollout strategy, rollback, observability, and policy) require additional systems such as Portainer, Kubernetes, or custom agents. Each adds binaries, processes, and operational overhead that must be managed on or alongside the device. Atym is designed as a unified system. The Runtime and Hub are built to work seamlessly together, and orchestration is part of the platform rather than an add-on. This removes an entire software tier and eliminates the associated per-device overhead.

3. Rootless by default. The Atym runtime agent runs as an unprivileged user, with no root-owned daemon on the device. Containers are launched using rootless semantics, leveraging user namespaces to map container root to a non-privileged host user. Docker’s default model relies on a root-owned daemon, with rootless operation available but not standard. Podman defaults to rootless, which is a meaningful advantage, though it retains a full local runtime model. The security implications are significant. A compromised workload cannot escalate through a root daemon that does not exist. A vulnerability in the agent exposes a smaller attack surface with fewer escalation paths. In fleets of devices operating in physically accessible or network-exposed environments, this default materially reduces risk.
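The user-namespace mapping behind rootless operation comes down to simple range arithmetic. The sketch below mirrors the three-field format Linux uses in `/proc/<pid>/uid_map` (ID inside the namespace, ID outside, range length). The specific mapping is a hypothetical example, not Atym's actual configuration, and `map_container_uid` is a helper written for illustration.

```python
def map_container_uid(uid, uid_map):
    """Translate a container UID to a host UID using uid_map-style entries.

    Each entry is (container_start, host_start, length), the same three
    fields that appear on each line of /proc/<pid>/uid_map.
    """
    for container_start, host_start, length in uid_map:
        if container_start <= uid < container_start + length:
            return host_start + (uid - container_start)
    return None  # this UID has no mapping on the host


# Hypothetical mapping: container root -> unprivileged host UID 1000,
# container UIDs 1..65536 -> a subordinate range starting at 100000.
UID_MAP = [(0, 1000, 1), (1, 100000, 65536)]

host_uid_for_root = map_container_uid(0, UID_MAP)   # 1000
host_uid_for_app = map_container_uid(33, UID_MAP)   # 100032
unmapped = map_container_uid(70000, UID_MAP)        # None
```

A process that is UID 0 inside the container is therefore an ordinary unprivileged user on the host, which is why a container escape does not automatically yield host root.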

Footprint in practice

The architectural decisions above are abstract until they’re reflected in diagrams and benchmarking. The graphics below illustrate the structure of each stack, including what runs on and off the device.

Docker keeps a persistent root daemon at the top of the on-device stack, with `dockerd` driving `containerd` and `runc` underneath. Podman replaces that daemon with an executable that is invoked on demand rather than sitting resident in memory, backed by `conmon`, `crun`, and a rootless networking helper. Atym places a small, unprivileged agent at the top of a much shorter stack and moves image resolution, policy, and orchestration off the device entirely to the Atym Hub.

The following table presents a like-for-like comparison of Atym with Docker and Podman.

| | Atym | Docker | Podman |
| --- | --- | --- | --- |
| Additional background daemon | No (small Atym agent only) | Yes (`dockerd`) | No |
| Agent / daemon runs as root | No | Yes (by default) | N/A (no daemon) |
| Built-in fleet orchestration | Yes (Atym Hub) | No (add Portainer, Swarm, K8s, etc.) | No (add K8s or Cockpit) |
| On-device OCI client responsibilities | Minimal (resolution and planning handled by central Hub) | Full (daemon does everything locally) | Full (CLI + local image store) |
| OCI runtime engine | `crun` | `runc` (default) | `crun` or `runc` |
| Runtime install size (adder over base Linux footprint) | 11.75 MB | 341.3 MB (full); 222.3 MB (minimal) | 134.2 MB |
| Idle resident memory | 10.1 MB | 119.1 MB | 0 MB |

Measurements taken on a dual-core ARMv8 system with 2GB of RAM running Ubuntu 24.04.
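The ratios implied by these measurements are easy to check. The sketch below uses only the figures from the table; the function and variable names are made up for the example. Note that Podman's idle figure is 0 MB because it has no resident daemon, so the memory comparison is only meaningful against Docker.

```python
# Figures copied from the comparison table (all in MB).
INSTALL_MB = {
    "Atym": 11.75,
    "Docker (full)": 341.3,
    "Docker (minimal)": 222.3,
    "Podman": 134.2,
}
IDLE_MB = {"Atym": 10.1, "Docker": 119.1, "Podman": 0.0}


def ratio_vs_atym(table):
    """Return each non-Atym entry divided by the Atym entry, to one decimal."""
    base = table["Atym"]
    return {
        name: round(value / base, 1)
        for name, value in table.items()
        if name != "Atym"
    }


install_ratios = ratio_vs_atym(INSTALL_MB)
idle_ratios = ratio_vs_atym(IDLE_MB)
idle_saved_vs_docker_mb = round(IDLE_MB["Docker"] - IDLE_MB["Atym"], 1)
```

On these numbers the install size is roughly 11x to 29x smaller than the alternatives, idle memory is roughly 12x lower than Docker's, and the idle saving against Docker is about 109 MB per device.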

It’s also important to distinguish between runtime overhead and workload footprint. The memory and storage consumed by application containers are independent of the runtime: for example, an nginx container has the same footprint regardless of whether it runs on Atym, Docker, or Podman. What differs is the baseline “tax” of the platform itself and, critically, whether the device has sufficient headroom to run containers at all.
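A back-of-the-envelope headroom calculation shows why the baseline tax decides viability on small devices. In the sketch below, the 48 MB OS baseline is a hypothetical figure chosen for illustration. The idle-memory numbers come from the table, which was measured on a 2 GB ARMv8 system, so they may differ on smaller hardware.

```python
def workload_headroom_mb(total_ram_mb, os_baseline_mb, runtime_idle_mb):
    """Memory left for application containers after the OS and the runtime."""
    return round(total_ram_mb - os_baseline_mb - runtime_idle_mb, 1)


TOTAL_RAM_MB = 128    # smallest Linux device class cited in this article
OS_BASELINE_MB = 48   # hypothetical: kernel plus base userspace

headroom_atym = workload_headroom_mb(TOTAL_RAM_MB, OS_BASELINE_MB, 10.1)
headroom_docker = workload_headroom_mb(TOTAL_RAM_MB, OS_BASELINE_MB, 119.1)
```

Under these assumptions the lighter runtime leaves about 70 MB for workloads, while the heavier one is already about 39 MB over budget before any container starts.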

In conclusion

Atym’s footprint advantage comes from three primary sources, in decreasing order of impact: the offloaded control plane and orchestration layer (work that’s not resident on the device), the absence of a root-owned, full-featured daemon, and the reduced on-device runtime surface—no build subsystem, no local CLI, and no redundant image-management logic.

On hardware where Docker and Podman are viable—typically ARMv8 systems with 2 GB of RAM or more—Atym provides OCI compatibility with materially lower memory overhead, integrated fleet orchestration, and rootless operation by default. Developers can leverage their existing container images, registries, and deployment workflows while freeing up 100-200MB of memory on every device in their fleet.

Meanwhile, Atym makes containerization possible on hardware that Docker and Podman have never been viable for. This includes Linux-capable systems with as little as 128MB of RAM, and with the Zephyr-based runtime variant, MCU-based devices that have as little as 256KB of memory.

Atym is the ideal container orchestration platform for organizations seeking to streamline engineering and operations across highly heterogeneous fleets of embedded devices and systems. Stay tuned for deeper dives into specific aspects of the solution such as container deployment, campaign management, centralized troubleshooting, and the migration path from Linux to Wasm containers on the same device.

In the meantime, you can take Atym for a spin through our free evaluation program. If you’d like to get more deeply involved in shaping the future of the technology and related standards, we welcome community contributions to the open source Ocre project in LF Edge, which is the heart of the Atym Runtime.
